Artificial Intelligence

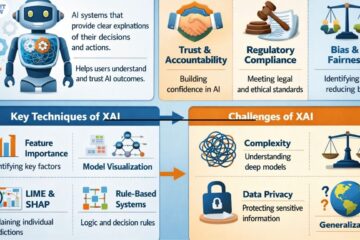

What Is Explainable AI? The Importance of Transparency, Techniques, and Challenges

Explainable AI refers to methods and techniques that make the decisions and outputs of AI systems understandable to humans. Rather than acting as a “black box”, an explainable model shows how and why it reached a particular conclusion.

What is Explainable AI?

Explainable AI is a subfield of artificial intelligence focused…